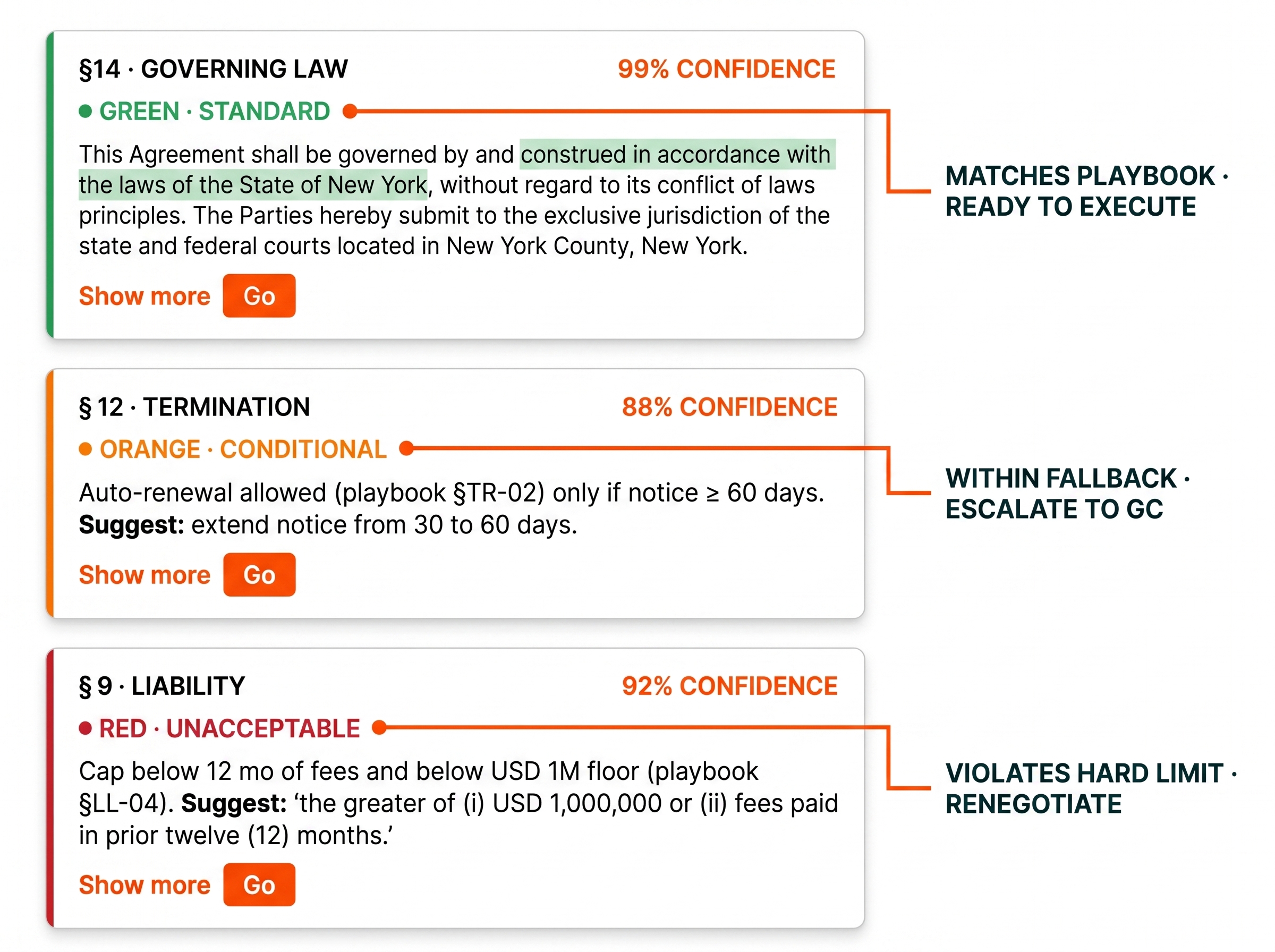

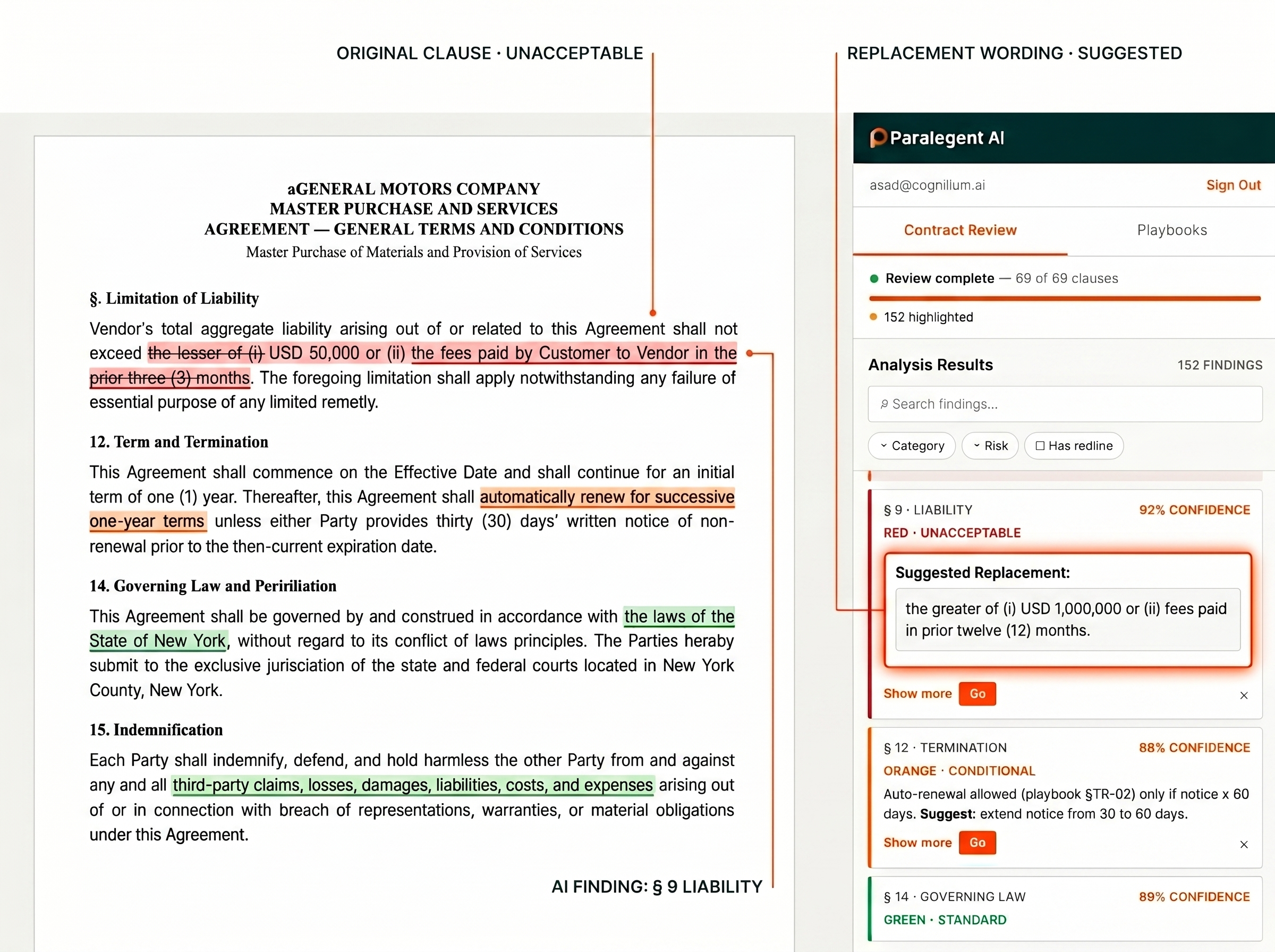

Every clause classified. Every finding explained. Every replacement ready. This is the deliverable your legal team actually uses — inside Microsoft Word, ready for negotiation. GREEN means go. ORANGE means escalate. RED means renegotiate.

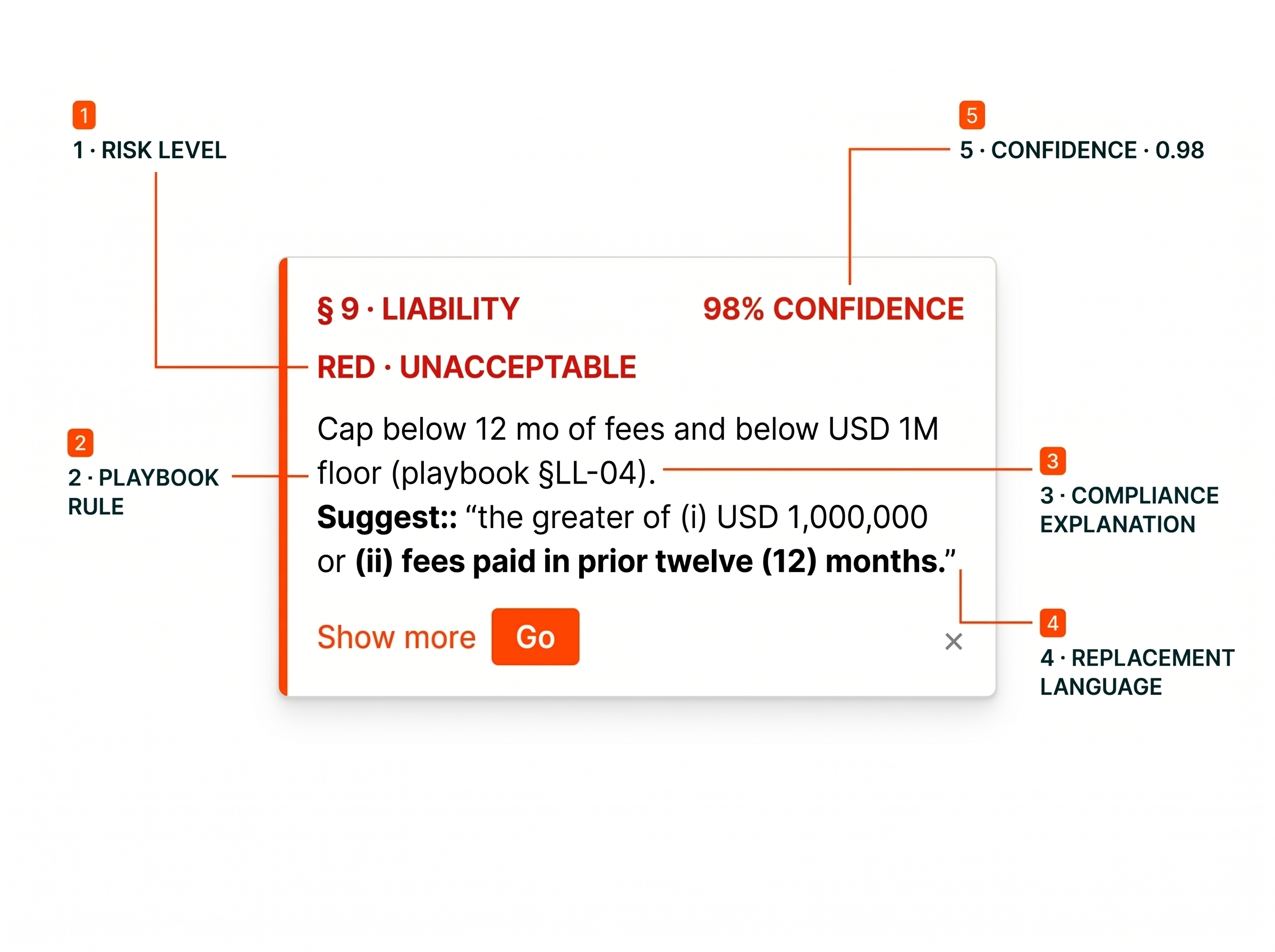

Structured output with 5 fields per finding — not vague AI summaries.

Most AI contract tools produce vague warnings with no playbook traceability, no classification, and no replacement language. Paralegent AI delivers 40-50 findings per MSA — each with the specific playbook rule violated, a plain-language explanation, and your approved replacement text. The output is the same whether a junior associate or senior partner runs the review.

The 3-tier classification system maps directly to action. GREEN means the clause matches your playbook — no action needed. ORANGE means it deviates from preferred but falls within your fallback threshold — route to senior counsel. RED means it violates hard limits with no fallback — renegotiate immediately. No ambiguity about what to do next.
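The three-tier mapping can be sketched in a few lines of Python. This is a minimal illustration, not Paralegent AI's implementation: the function name `classify_numeric_term` is hypothetical, and it assumes a clause can be reduced to a single number with an upper bound (a range with a lower bound, such as Net 30-60, would need one more check). The thresholds mirror the warranty example on this page: preferred 2 years, fallback up to 3.

```python
from typing import Optional


def classify_numeric_term(value: float, preferred_max: float,
                          fallback_max: Optional[float] = None) -> str:
    """Return GREEN, ORANGE, or RED for a clause reduced to one number.

    Hypothetical helper for illustration only.
    """
    if value <= preferred_max:
        return "GREEN"   # matches the preferred position: no action needed
    if fallback_max is not None and value <= fallback_max:
        return "ORANGE"  # inside the fallback threshold: route to senior counsel
    return "RED"         # beyond every approved position: renegotiate


# Warranty period in years: preferred 2, fallback up to 3.
print(classify_numeric_term(2, preferred_max=2, fallback_max=3))  # GREEN
print(classify_numeric_term(3, preferred_max=2, fallback_max=3))  # ORANGE
print(classify_numeric_term(5, preferred_max=2, fallback_max=3))  # RED
```

Passing no `fallback_max` models a hard limit with no fallback: anything past the preferred position goes straight to RED.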

Every finding includes: (1) Risk level — GREEN, ORANGE, or RED. (2) Playbook rule — the exact term from your 80-150-term playbook that was violated. (3) Compliance explanation — plain-language reasoning. (4) Replacement language — your approved preferred or fallback wording. (5) Confidence score — 0.0 to 1.0 certainty. Teams use this structure to triage 40-50 findings in under 30 minutes.
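As a rough picture of the five fields, a finding could be modeled as a small record. The class name `Finding` and its field names are assumptions made for this sketch, not a documented export schema, and the confidence value in the example is illustrative only.

```python
from dataclasses import dataclass

RISK_LEVELS = ("GREEN", "ORANGE", "RED")


@dataclass(frozen=True)
class Finding:
    """One redline finding with the five fields described above (hypothetical model)."""
    risk_level: str     # GREEN, ORANGE, or RED
    playbook_rule: str  # the playbook term the clause was checked against
    explanation: str    # plain-language reasoning
    replacement: str    # approved preferred or fallback wording
    confidence: float   # 0.0 to 1.0

    def __post_init__(self) -> None:
        # Reject values outside the ranges the page describes.
        if self.risk_level not in RISK_LEVELS:
            raise ValueError(f"unknown risk level: {self.risk_level!r}")
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0.0 and 1.0")


red_example = Finding(
    risk_level="RED",
    playbook_rule="Playbook Term 4.3: Limitation of Liability",
    explanation="Hard limit is 12 months of fees paid; no fallback exists.",
    replacement="Aggregate liability shall not exceed 12 months of fees paid; "
                "consequential and indirect damages excluded.",
    confidence=0.90,  # illustrative value, not taken from a real review
)
```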

Every replacement suggestion comes from YOUR playbook — preferred positions for RED findings, fallback positions for ORANGE findings. Not generic AI suggestions from training data. Your approved wording, applied consistently across every contract, every reviewer. Copy-paste ready in the Microsoft Word sidebar with a single click.

Three real-world examples showing the full anatomy of a Paralegent AI finding — GREEN (favorable), ORANGE (conditional), and RED (unacceptable). Each includes the playbook rule, explanation, replacement language, and confidence score.

GREEN (favorable)

Clause: Buyer shall pay all undisputed invoices within sixty (60) days of receipt of a valid invoice.

Finding: Matches Playbook Term 2.1: Payment Terms. Net 60 days falls within the preferred range of Net 30-60.

ORANGE (conditional)

Clause: Supplier warrants that the Products shall be free from defects in materials and workmanship for a period of three (3) years from the date of delivery.

Finding: Exceeds preferred 2-year warranty (Playbook Term 5.2: Warranty Period) but falls within fallback threshold of 3 years.

Replacement: “Supplier warrants the Products shall be free from defects for a period of two (2) years from the date of delivery.”

RED (unacceptable)

Clause: Supplier's liability under this Agreement shall be unlimited and shall include all direct, indirect, and consequential damages.

Finding: Violates Playbook Term 4.3: Limitation of Liability. Hard limit is 12 months of fees paid; no fallback exists.

Replacement: “Aggregate liability shall not exceed 12 months of fees paid; consequential and indirect damages excluded.”

What you actually receive matters more than how the AI works. Compare the output quality — not just the technology.

| | Paralegent AI Redlines | Generic AI Output | Manual Attorney Notes |
|---|---|---|---|
| Findings per MSA | 40-50 precise | 10-20 vague | 15-25 (varies by reviewer) |
| Risk Classification | GREEN/ORANGE/RED | Pass/Fail or none | Subjective notes |
| Playbook Traceability | Exact rule cited per finding | No playbook | Reviewer memory |
| Compliance Explanation | Plain language per finding | Generic warnings | Expert judgment (varies) |
| Replacement Language | From your playbook (preferred + fallback) | Generic suggestions | Ad hoc drafting |
| Confidence Score | 0.0-1.0 per finding | None | None |
| Summary Report | Risk distribution + category breakdown | Basic list | Manual compilation |
| Consistency | Identical across all reviewers | Model-dependent | Varies by attorney |
| Delivery Time | 2-8 minutes | 5-10 minutes | 20-30 hours |
| Approval Routing | Auto-routed by tier + confidence | None | Manual escalation |

Five outcomes from a structured output format that goes beyond pass/fail.

Request a demo — we review one of your MSAs live and show every finding with classification, replacement language, and confidence score.

Common questions about the classification system, confidence scoring, replacement language, and how to prioritize 40-50 findings efficiently.

Paralegent AI produces 40-50 precise redline findings per 80-page MSA. Each finding includes a 3-tier risk classification (GREEN, ORANGE, or RED), the specific playbook rule applied, a compliance explanation, replacement language drawn from your playbook, and a confidence score.

Every finding has five components: (1) Risk level — GREEN, ORANGE, or RED classification. (2) Playbook rule — the specific term from your playbook that was violated. (3) Compliance explanation — why the clause was flagged, in plain language. (4) Replacement language — alternative wording from your approved positions. (5) Confidence score — how certain the agent is about the classification.

GREEN means the clause matches your playbook's preferred or acceptable position. No action is required — the term is favorable and ready for execution. Example: "Payment within Net 60 days" when your playbook allows Net 30-60 as the preferred range. GREEN findings confirm alignment.

ORANGE means the clause is conditionally acceptable — it deviates from your preferred position but falls within your fallback threshold. It typically requires senior counsel or General Counsel approval before execution. Example: a 3-year warranty when your standard is 2 years but your fallback allows up to 3 years.

RED means the clause is unacceptable — it violates your playbook's hard limits with no fallback position. These are deal-breakers that must be renegotiated before signing. Example: unlimited liability when your playbook caps liability at 12 months of fees. RED findings always include replacement language.

Each finding includes a confidence score from 0.0 to 1.0 indicating how certain the AI agent is about its classification. High-confidence RED findings (0.85+) should be prioritized for immediate legal review. Borderline ORANGE findings (0.50-0.65) may need human judgment to determine the final classification.

Replacement language is sourced directly from YOUR custom playbook — not generated from generic AI training data. Each playbook term includes preferred positions and fallback language. When a clause is flagged, the system suggests your approved alternative wording, ensuring consistency across all contracts.

The summary report shows the overall risk distribution (how many GREEN, ORANGE, and RED findings), a category breakdown across all 18+ legal categories, findings sorted by confidence score, and an approval chain recommendation — which findings need GC approval versus standard review. Full analysis completes in 2-8 minutes.
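The aggregation behind such a report is straightforward to picture. The sketch below assumes each finding is a `(risk_level, category, confidence)` tuple; that shape, the function name `summarize`, and the sample data are all hypothetical, and the approval chain recommendation is left out because its rules are not described here.

```python
from collections import Counter


def summarize(findings):
    """Build a summary from (risk_level, category, confidence) tuples (assumed shape)."""
    findings = list(findings)
    return {
        # How many GREEN, ORANGE, and RED findings.
        "risk_distribution": dict(Counter(risk for risk, _, _ in findings)),
        # How many findings fall in each legal category.
        "category_breakdown": dict(Counter(cat for _, cat, _ in findings)),
        # Findings sorted from most to least certain.
        "by_confidence": sorted(findings, key=lambda f: f[2], reverse=True),
    }


# Made-up sample data for illustration.
sample = [
    ("RED", "Limitation of Liability", 0.91),
    ("ORANGE", "Warranty", 0.62),
    ("GREEN", "Payment Terms", 0.97),
    ("GREEN", "Warranty", 0.88),
]
report = summarize(sample)
print(report["risk_distribution"])  # {'RED': 1, 'ORANGE': 1, 'GREEN': 2}
```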

Yes — your playbook defines the thresholds. You control which terms are GREEN (auto-approve), ORANGE (conditional with senior review), and RED (must renegotiate). The confidence threshold for escalation is also configurable per legal category, so your team reviews only what matters.
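One way to picture a per-category escalation threshold is a lookup table with a default. Everything in this sketch is an assumption: the category names, the threshold values, the name `needs_human_review`, and the rule that a finding is escalated when its confidence falls below the threshold for its category.

```python
# Hypothetical configuration: confidence below the threshold triggers human review.
DEFAULT_THRESHOLD = 0.65

ESCALATION_THRESHOLDS = {
    "Limitation of Liability": 0.85,  # stricter for a high-stakes category
    "Payment Terms": 0.50,            # more lenient for a routine category
}


def needs_human_review(category: str, confidence: float) -> bool:
    """Return True when a finding's confidence is below its category threshold."""
    threshold = ESCALATION_THRESHOLDS.get(category, DEFAULT_THRESHOLD)
    return confidence < threshold


print(needs_human_review("Limitation of Liability", 0.80))  # True
print(needs_human_review("Payment Terms", 0.80))            # False
```

Categories without an explicit entry fall back to `DEFAULT_THRESHOLD`, so the table only needs to list the exceptions.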

Teams prioritize by combining risk level and confidence score. High-confidence RED findings (0.85+) are reviewed first — these are clear deal-breakers. Then ORANGE findings sorted by confidence. GREEN findings are confirmed quickly. Most teams complete full review of all 40-50 findings in under 30 minutes.
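That review order amounts to a two-key sort: risk tier first, then confidence from highest to lowest. The sketch below assumes findings are `(risk_level, confidence, label)` tuples; the tuple shape, the name `triage_order`, and the sample values are hypothetical.

```python
# Lower number = reviewed earlier.
RISK_PRIORITY = {"RED": 0, "ORANGE": 1, "GREEN": 2}


def triage_order(findings):
    """Sort (risk_level, confidence, label) tuples: RED first, then by confidence descending."""
    return sorted(findings, key=lambda f: (RISK_PRIORITY[f[0]], -f[1]))


# Made-up findings for illustration.
queue = triage_order([
    ("GREEN", 0.97, "Payment terms"),
    ("ORANGE", 0.55, "Warranty period"),
    ("RED", 0.91, "Liability cap"),
    ("ORANGE", 0.80, "Termination notice"),
    ("RED", 0.70, "Indemnification"),
])
for risk, confidence, label in queue:
    print(f"{risk:<6} {confidence:.2f} {label}")
```

With this data, the liability cap (RED, 0.91) is reviewed first and the payment terms (GREEN, 0.97) last, regardless of the GREEN finding's higher confidence.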